Every serious attempt to reduce emissions starts with the same question: how much CO2e are we actually producing?

The data needed to answer that question—emission factors—exists, and has existed for decades. The problem was never that the data was missing, but that none of it was compatible.

One source reports emissions per kilogram of material. Another uses tonnes. Some factors sit in sprawling Excel files with inconsistent headers. Others are buried in PDF annexes. To use data from multiple sources, you need to understand each source's quirks deeply enough to make them comparable, and unify them through a process called normalization. That kind of expertise takes years to develop, delaying reliable carbon reporting for a lot of organizations.

We built our emission factor database to change that. Every factor is available in a consistent unit, tagged with its source, publication year, geographic region, LCA boundaries, GHG Protocol scope, and IPCC assessment report version. The schema is the same whether the data came from a UK government spreadsheet, a French environmental agency report, or a global life cycle inventory database.

We recently passed one million factors in our database. But one million emission factors only means something if you can actually use them. Normalization makes that possible. Without it, collecting large amounts of emission factors is useless.

When data is easy to find and consistently structured, it can be used inside the tools where decisions are actually made: procurement platforms, financial models, sustainability dashboards. It stops sitting in a document and starts informing real choices. That’s what we wanted to make possible.

We haven’t reached 1m emission factors by adding every single thing we find—credibility matters much more to us than scale. Every factor in the Climatiq database carries an implicit promise: that it is scientifically sound, methodologically transparent, and legally clear to use. Keeping that promise requires processes that don't scale easily.

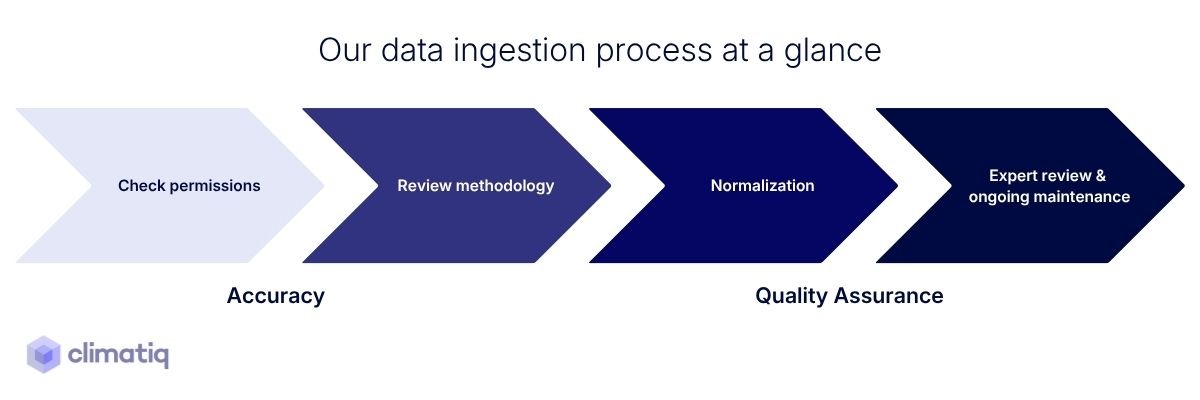

When we assess a new dataset, there are a few questions we ask:

A dataset that can't answer these questions doesn't make it in.

One of the principles we've held to from the beginning is that every factor in the database should be traceable. Not just to a source name, but to the specific dataset, the methodology document, the publication year, the geographic scope, and the assumptions embedded in how it was calculated. This is what makes it possible for a user to understand whether it's appropriate for their use case.

This commitment also shaped how we built the Data Explorer and source pages. Every dataset in the database has a dedicated source page that surfaces data quality, methodology notes, LCA boundary, licensing information, emission breakdowns, applicable standards, and direct links to the underlying sources. The goal is that any user can follow the thread from a single emission factor all the way back to where it came from. That transparency gives our data and calculations credibility.

For more info, check our Methodology Hub.

When we started building the emission factor database, we assumed the hardest part would be scale: finding and processing enough sources to matter. But as we got deeper into the work, our understanding of what "scale" actually meant kept expanding.

The first thing we learned was that the data ingestion process was far more time consuming than we expected. Early on this was largely manual work, and manual work doesn't scale. So we invested heavily in building an automated data pipeline that could handle ingestion more efficiently, which has become foundational to scale the ingestion process.

The second thing we learned was that the schema itself needed to grow. We started with a solid foundation, but as use cases multiplied, the labeling requirements deepened, for example to include scopes. Product carbon footprint calculations introduced the need to handle biogenic emissions separately. Each new reporting context revealed a dimension we hadn't previously anticipated.

The third thing we learned was that our customers' reporting needs were more granular than we had anticipated. Organizations needed regional specificity and factors that matched their operating context. And each new category we introduced came with its own calculation complexity and its own specific data requirements.

Normalization is harder than it looks. Sources differ not just in units, but in how they define system boundaries, how they handle upstream versus direct emissions, which greenhouse gases they include, and which IPCC assessment report version they use. A factor that looks comparable to another might be measuring something meaningfully different. Building a schema robust enough to capture these distinctions accurately required continuous iteration. The schema we have today is considerably richer than the one we started with, and it will keep evolving.

Licensing turned out to be a discipline of its own. As we expanded into premium datasets—IEA, ecoinvent, EXIOBASE, CEDA, and others—we encountered a landscape of usage rights. Some datasets permit broad commercial use. Others come with strict attribution requirements, restrictions on redistribution, or conditions that vary depending on how the data is used downstream. We dedicated real human capacity to this, because our users rely on us to ensure that the factors they use in their products and reports are legally clear. There is no automation shortcut here.

Scientific review remains human. Automation has been indispensable for validation checks, detecting outliers, and flagging inconsistencies. But verifying that an emission factor is scientifically sound requires reading the methodology document behind it, understanding the assumptions, and exercising judgment. That work sits with our team of scientists and carbon accounting experts. The same applies to source discovery. Tracking updates and identifying emerging data needs are all tasks that require expertise and judgment, not just processing power.

The arrival of AI-assisted data ingestion has made it easier than ever to build a large emission factor database quickly. That's not inherently a problem. But speed and scale without rigorous vetting is a problem, and we're starting to see it in the field.

The temptation is understandable. A large number looks credible and can signal coverage, investment, and seriousness. But emission factors are not web pages or product listings. A factor that hasn't been traced to a verified methodology, reviewed by someone who understands the science, or checked for consistency with comparable sources isn't a data point, it's a liability. And unlike an incorrect product description, an incorrect emission factor doesn't just mislead one user. It gets embedded into carbon accounting tools, feeds into corporate reports, informs procurement decisions, and shapes regulatory disclosures.

The field of carbon accounting is still building its credibility. The tools and data that underpin it need to earn trust. It needs factors it can stand behind. That distinction is easy to articulate but hard to operationalize, which is why it matters that a real human with real expertise is doing the verification work and can stand behind it.

A million is a large number. It's also, on its own, a meaningless one. The question that matters is what those million factors actually cover, and whether the organizations that need them can trust them.

Climatiq’s coverage is real, and reaching this scale means that sectors, geographies, and supply chain tiers that were previously out of reach are now covered. Whether it’s a manufacturer trying to understand the embedded emissions in a specific material sourced from different locations, or an organization reporting scope 3 emissions across a multi-tier supply chain: these use cases require breadth and specificity simultaneously. The large number of emission factors is a byproduct of doing the work correctly, and expanding coverage in response to what customers actually needed.

One million emission factors is a milestone worth marking, but it's not the finish line.

The work of building a database that carbon accounting can rely on never stops, because the science evolves, methodologies get updated, new industries come into scope, and the reporting requirements keep raising the bar.

What we've built is a foundation. The breadth is there. The rigor is there. The traceability is there. And we'll keep building on it. For example,we're expanding into product carbon footprint data, which requires deeper lifecycle inventory coverage and greater specificity at the material and process level. We're also extending into additional environmental indicators, such as FLAG emissions.

The throughline of everything we've described in this post is simple: carbon data that can't be trusted doesn't help anyone measure anything. It just adds noise to a problem. The value of a rigorous emission factor database isn't in the number, it's in what organizations can do with it, knowing that someone has already done the hard work to make sure it can be trusted.

That's what we set out to build. That's what we're continuing to build.